Scanning Is Not Knowing

Your AI has a million-token context window. It still forgets what it learned yesterday.

Scanning Is Not Knowing

Your AI model has a context window measured in millions of tokens. It can scan an entire codebase in one pass. It can hold a two-hour conversation without losing the thread.

It also has no idea what it learned yesterday.

Familiar Ground

The context window race is the loudest conversation in AI. Every model release announces a bigger number. One hundred thousand tokens. One million. Two million. The implicit promise: a bigger window means a smarter model. If your AI could just see more, it would know more.

You experience the opposite daily. You start a new session and spend the first thirty minutes re-explaining what the model already learned in the last session. Your coding standards. Your project architecture. Your naming conventions. Your preferences. You have typed the same context into different sessions dozens of times. Each session starts cold.

A practitioner documented this pain and the framing spread to 212,000 views. The resonance tells you something: this is not a personal workflow problem. It is a structural gap in how the industry thinks about AI memory.

Counter-Signal

The industry is optimising the wrong variable.

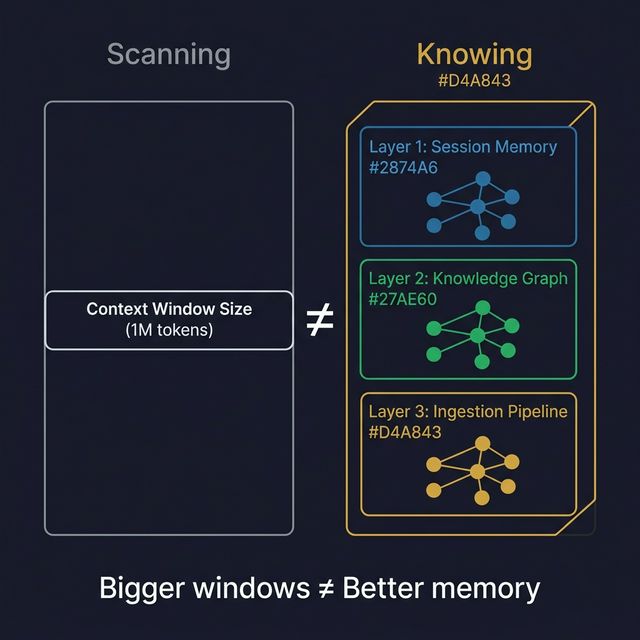

Context window size determines how much an AI can scan in a single session. It does not determine how much it knows. Cognitive science has known this distinction for decades. Nelson Cowan’s research established that human working memory holds roughly four chunks, regardless of how much information is available. Having access to a library does not mean you have read the library.

The same principle applies to AI. A million-token context window means the model can scan a million tokens. It does not mean it understands or retains any of them between sessions. The window is a scanner, not a memory. When the session ends, everything disappears.

Teams lose an estimated thirty minutes per session rebuilding context. That is a full workday per week spent telling the AI what it already should know. You are not paying for intelligence. You are paying for amnesia.

⚛️ The Fusion

Three ideas crash here, and the collision reveals a missing layer in every AI deployment.

Cowan’s 4-chunk limit establishes the cognitive science foundation. Working memory, whether human or artificial, is not about volume. It is about structure. You do not become smarter by seeing more information. You become smarter by organising information into retrievable structures. A million-token context window is more working memory, but it is still working memory: volatile, session-scoped, gone when you close the tab.

The 3-layer memory architecture maps the solution. A complete AI memory system has three layers, and most deployments have only one:

| Layer | Function | Human Analogy | What Most Teams Have |

|---|---|---|---|

| 1 | Session Memory | Working memory, today’s focus | CLAUDE.md, system prompts ✅ |

| 2 | Knowledge Graph | Long-term memory, structured recall | Persistent cross-referenced knowledge ❌ |

| 3 | Ingestion Pipeline | Learning, new information absorption | Systematic external signal capture ❌ |

Layer 1 is a file that tells the model who it is and what to do right now. Most teams have this. Layer 2 is a persistent knowledge base the model can query across sessions, retrieving what it learned last week or last month. Almost nobody has this. Layer 3 is a structured process for capturing new external information and converting it into knowledge the model can use later. Virtually nobody has this.

What if you could see your AI not as a chat window with a bigger scanner, but as an intelligence system wearing an “exosuit” of accumulated knowledge?

Context amnesia is the organisational failure mode that results from having Layer 1 without Layers 2 and 3. The model starts every session from scratch. The knowledge it produced yesterday is not available today. The patterns it recognised last week are invisible this week. Each session is brilliant and isolated. Output plateaus instead of compounding.

The metaphor is precise: context amnesia is to AI systems what knowledge loss is to organisations with high turnover. The person leaves. The knowledge leaves with them. The next person starts from zero.

| Scanning (Context Window) | Knowing (Memory System) |

|---|---|

| Volatile: gone when session ends | Persistent: survives across sessions |

| Flat: all information at same depth | Structured: layers with different retrieval patterns |

| Bigger is the only improvement axis | Deeper is the improvement axis |

| Session starts cold every time | Session starts warm with accumulated context |

| Output plateaus after initial gains | Output compounds over time |

| Measures capacity (tokens) | Measures depth (structured knowledge) |

The New Pattern

The diagnostic question for any AI deployment: how many of the three memory layers do you have?

Layer 1 (session memory) is table stakes. A system prompt, a CLAUDE.md file, a set of instructions. This is what your AI knows about today’s task. Without it, every interaction starts from absolute zero.

Layer 2 (knowledge graph) is what separates productive AI use from impressive demos. This is structured, cross-referenced knowledge that persists between sessions. When your model encounters a problem it solved last month, it should be able to retrieve that solution without you re-typing it. Most teams do not have this.

Layer 3 (ingestion pipeline) is what separates knowledge systems from static ones. This is a structured process for capturing external signals, translating them into structured knowledge, and making them available to the model. Without it, the knowledge graph only knows what you manually fed it. It cannot learn from the world.

The compounding effect is the key. An AI system with all three layers gets smarter over time. Each session adds to the knowledge graph. Each external signal enriches the ingestion pipeline. The model in month six is categorically more capable than the model in month one, not because the model changed but because the memory system accumulated.

Without Layer 2 and 3, your AI is an amnesiac genius. Brilliant for fifty minutes. Blank at the start of the next hour.

The Open Question

The AI industry is racing to build bigger context windows. Bigger scanners. More tokens per session.

But scanning is not knowing. Volume is not structure. And a million-token window that resets every session is still amnesia with better optics.

How much of what your AI learned last month can it access right now, without you re-teaching it?

This fusion emerged from a STEAL on a practitioner’s AI memory stack architecture, which reached 212K views by naming the pain point every AI user recognises: context amnesia.