The Leverage Lie

Naval said code is leverage. He was half right.

You opened a chat window this morning, pasted a brief, waited for the response, edited it, pasted it back, waited again, and called the result “AI leverage.”

It wasn’t.

Familiar Ground

Naval Ravikant’s leverage taxonomy is one of the most cited frameworks on the builder internet. Three types: labor, capital, and code (which, for Naval, includes media). He argued that code and media are the newest, most democratic forms because they require no permission. “An army of robots is freely available,” he wrote. “It’s just packed in data centres for heat and space efficiency.”

He was right about code being leverage. What he didn’t anticipate is what the army of robots actually does now. The current generation of AI isn’t code leverage. Code leverage scales identical output: one piece of software serves a million users. What you get from an AI agent is something else entirely. You get differentiated output, each response shaped by the context you provide. That’s closer to labor. And Naval dismissed labor as the worst form of leverage because managing other people is “incredibly messy.” Career conflicts, performance reviews, coordination overhead.

But AI agents don’t have career goals. They don’t need performance management. They don’t get offended by repetitive work. You get labor’s output capacity attached to code’s permissionless accessibility.

George Nurijanian, a product leader and creator of prodmgmt.world, recently named this gap. His observation is sharp: most product managers use AI in a way that misses the leverage entirely. One window, one prompt, wait, read, prompt again. Faster than before, but structurally identical to doing the work yourself.

Counter-Signal

Michael Gerber identified this trap in 1986, decades before anyone was prompting AI agents. His book *The E-Myth* (updated in 1995 as *The E-Myth Revisited*) argues that most small businesses fail because their founders are skilled technicians who end up doing all the work themselves rather than building systems that do the work for them. The baker who opens a bakery and then spends every morning baking. The programmer who starts a company and then writes every line of code.

The trap is not incompetence. It is excellence applied at the wrong altitude.

You are in the technician trap right now. You open a single AI chat. You type a careful prompt. You wait. You read. You edit. You prompt again. You are doing the work, sequentially, personally, one task at a time. The speed is real. The leverage is not.

Speed and leverage are not the same thing. Speed means you do the same work faster. Leverage means the work gets done without you doing it. A faster version of yourself is still just you.

⚛️ The Fusion

Three ideas crash here.

Naval’s taxonomy gives you the categories: labor (people), capital (money), code (software). Each amplifies output. Each has a cost. Labor costs coordination. Capital costs access. Code costs nothing to replicate, but produces identical output, the same program running for every user.

Gerber’s E-Myth gives you the failure mode: the technician who works IN the business instead of ON it. The person who does the work instead of designing the system that does the work. Applied to AI: the knowledge worker who prompts the chat instead of orchestrating the agents.

Governed orchestration gives you the missing mechanism. Nurijanian proposes running three agents in parallel, each with a focused brief: one pulls customer research, one drafts the spec, one cross-references the backlog. The user reviews and integrates rather than executes. This is the right direction. But it is incomplete.
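The shape of that parallel pattern can be sketched in a few lines, assuming each agent is simply a function from a brief to a draft. The `Brief` type, the stub `run_agent` function, and the three brief texts are hypothetical placeholders, not Nurijanian’s tooling or any real agent API; a real setup would call a model where the stub returns a string.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Brief:
    role: str  # which agent this brief is for
    task: str  # the single, focused job it is given


def run_agent(brief: Brief) -> str:
    # Hypothetical stub: a real agent would send the brief to a model here.
    return f"[{brief.role}] draft for: {brief.task}"


def fan_out(briefs: list[Brief]) -> dict[str, str]:
    # Dispatch every brief at once instead of prompting one after another.
    with ThreadPoolExecutor(max_workers=max(1, len(briefs))) as pool:
        drafts = pool.map(run_agent, briefs)  # results come back in brief order
        return {b.role: d for b, d in zip(briefs, drafts)}


briefs = [
    Brief("research", "pull recent customer interview themes"),
    Brief("spec", "draft the feature spec"),
    Brief("backlog", "cross-reference open backlog items"),
]

for role, draft in fan_out(briefs).items():
    # The human step: review and integrate, not execute.
    print(role, "->", draft)
```

Note that nothing in this sketch checks whether the three drafts agree with each other or meet any bar. That missing layer is the governance question.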

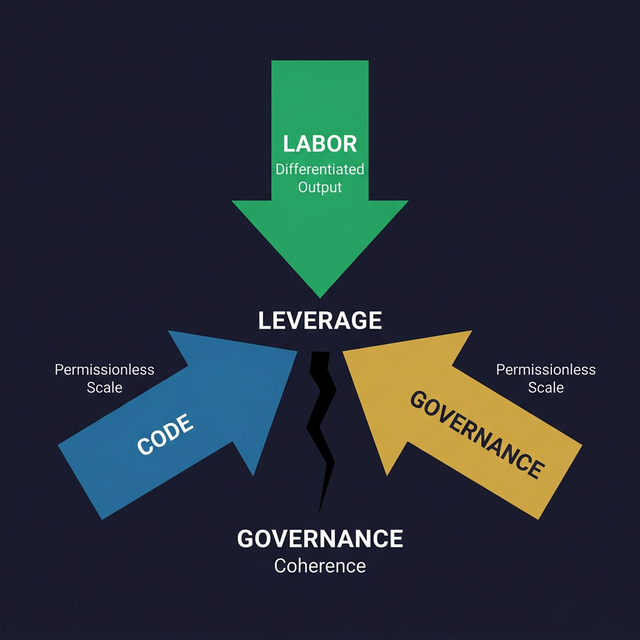

What if you could see the problem as a triangle? Code gives you scale (identical output, infinite copies). Labor gives you diversity (differentiated output, multiple perspectives). And governance gives you coherence (the rules that prevent parallel output from becoming parallel noise).

Parallelism without governance is not leverage. It is three agents producing three mediocre outputs. The plumber who hires three apprentices without a training manual does not have a plumbing business. He has three problems instead of one.

The missing layer is the constitution: what the agents are not allowed to compromise, how conflicts between their outputs are resolved, and what quality looks like before you accept any of it. Without that layer, you are building Gerber’s bakery again, just with robotic bakers. Fast, cheap, and out of control.
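One way to make a constitution concrete is to treat it as data the orchestrator checks before any output is accepted. This is a minimal sketch under loud assumptions: the `Constitution` fields, the phrase-based non-negotiable check, the word-count quality bar, and the priority-order conflict rule are all hypothetical stand-ins for whatever rules a real team would write.

```python
from dataclasses import dataclass, field


@dataclass
class Constitution:
    # What no agent may compromise (here: phrases a draft must never contain).
    forbidden: list[str] = field(default_factory=list)
    # What "done" means (here: a crude minimum word count as a placeholder bar).
    min_words: int = 5
    # How conflicts resolve (here: roles earlier in the list win).
    priority: list[str] = field(default_factory=list)

    def passes(self, draft: str) -> bool:
        text = draft.lower()
        if any(phrase.lower() in text for phrase in self.forbidden):
            return False
        return len(draft.split()) >= self.min_words

    def resolve(self, claims: dict[str, str]) -> str:
        # Return the claim from the highest-priority role that made one.
        for role in self.priority:
            if role in claims:
                return claims[role]
        raise ValueError("no role in the priority list made a claim")


def gate(constitution: Constitution, drafts: dict[str, str]) -> dict[str, str]:
    # Only drafts that clear the gate reach the human for synthesis.
    return {role: d for role, d in drafts.items() if constitution.passes(d)}


rules = Constitution(
    forbidden=["ship without review"],
    min_words=5,
    priority=["research", "spec", "backlog"],
)

drafts = {
    "research": "Customers churn when onboarding takes more than a week",
    "spec": "Ship without review to hit the date",
    "backlog": "Dup",
}

accepted = gate(rules, drafts)
print(sorted(accepted))  # ['research']
print(rules.resolve({"spec": "launch in Q3", "backlog": "launch in Q4"}))  # launch in Q3
```

Priority order is only one possible conflict rule. Routing every disagreement straight to a human reviewer is another, and arguably the one that keeps judgment where it belongs.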

| The Technician Trap | Governed Leverage |
|---|---|
| One chat, sequential prompts | Multiple agents, parallel briefs |
| You do the work faster | The system does the work |
| Speed is the metric | Judgment is the bottleneck |
| No quality gate on output | Constitution defines what “done” means |
| Each session starts cold | Reasoning threads accumulate across sessions |
| Output volume = progress | Output coherence = progress |

The New Pattern

The real update to Naval’s framework is not a fourth category. It is the recognition that AI agents sit at the intersection of two existing categories, and that intersection needs a governance layer to work.

Labor without coordination fails. Naval was right about that.

Code scales, but only by producing identical output. Also right.

AI agents give you differentiated output (labor) at permissionless scale (code). But the coordination cost, the thing that makes labor messy, does not disappear. It changes form. Instead of managing people’s motivations, you manage the constitution: the briefs, the boundaries, the quality gates, the conflict resolution protocol.

The bottleneck shifts. In the technician trap, your bottleneck is execution: typing, prompting, reading. In governed leverage, your bottleneck is synthesis and judgment: deciding what matters, integrating perspectives, making tradeoffs. That is the right bottleneck to have. That is where human value lives.

Gerber would call this “working on the business.” Naval would call it “judgment as leverage.” The principle is the same: the person who designs the system captures more value than the person who operates it, no matter how fast the operator moves.

The Open Question

You have access to an army of robots. Naval told you this years ago.

But an army without rules of engagement is not an army. It is a crowd.

What constitution have you written for yours?

This fusion emerged from a STEAL on George Nurijanian’s AI leverage article (@nurijanian, 13 March 2026), grounded against Naval Ravikant’s leverage taxonomy and Michael Gerber’s *The E-Myth Revisited*.